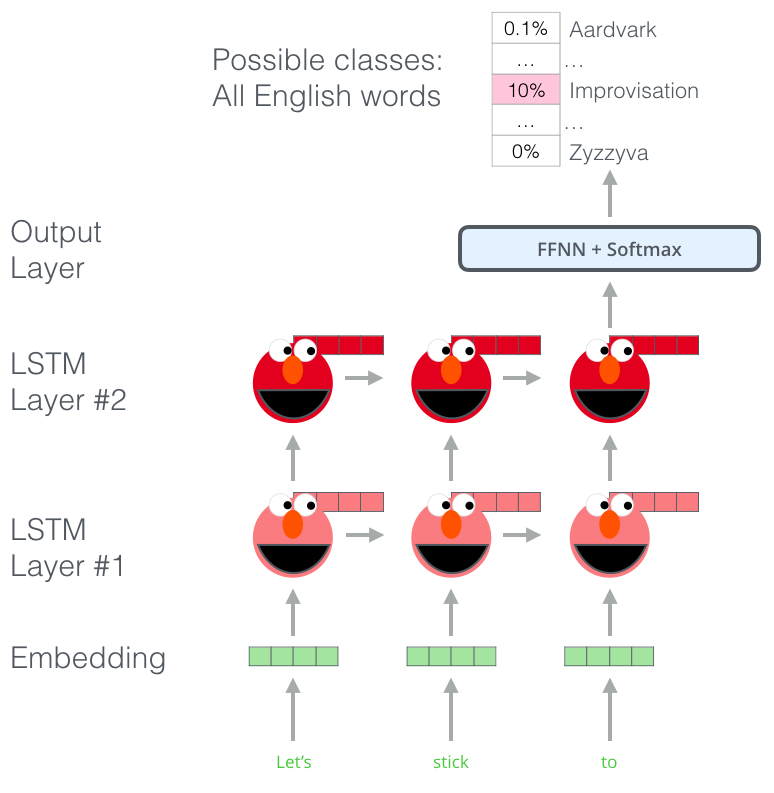

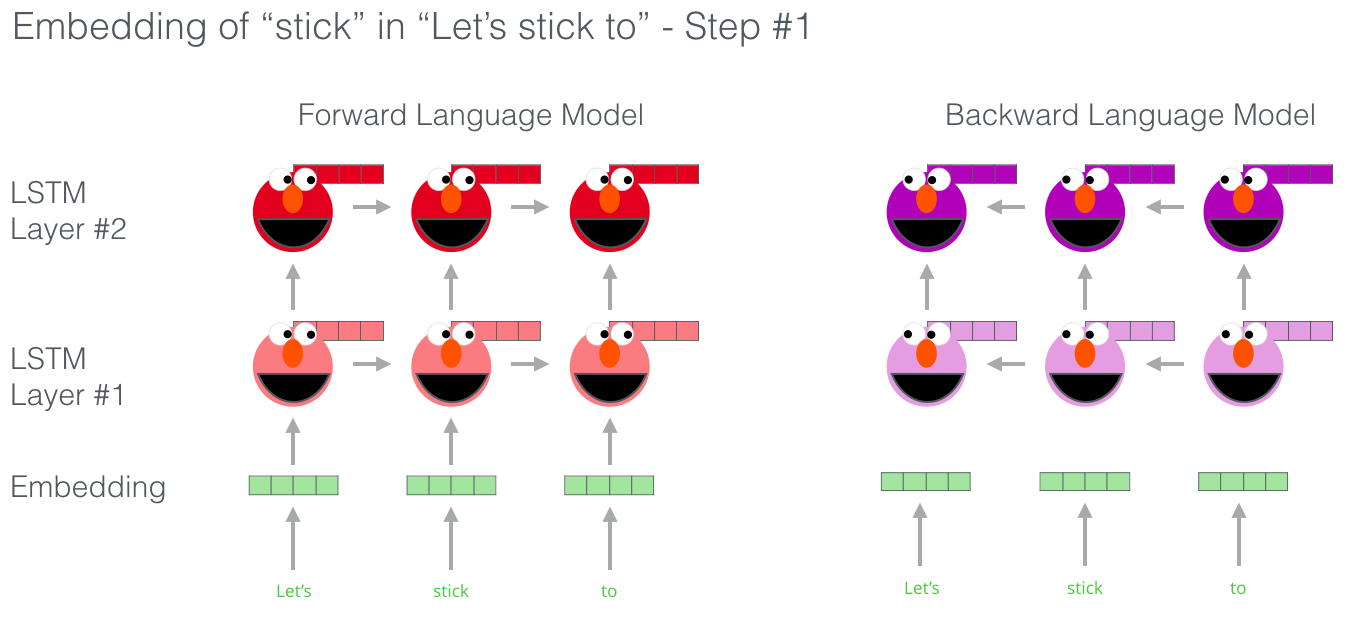

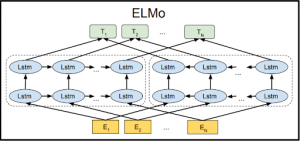

Matthew Peters on Twitter: "Our paper "Deep contextualized word representations" is now on Arxiv. ELMo representations from pre-trained language models set new SOTA for 6 diverse NLP tasks, SQuAD, SNLI, SRL, coref,

How the vocab_to_id mapping is decided in ELMo without an explicit mapping file? · Issue #3318 · allenai/allennlp · GitHub